ML - Regression problem

A simple regression example - 2D space y = ax + b.

We use a simple 2D regression because it helps visualize the problem and the solution. The same approach also works for multiple inputs and outputs, which are difficult to visualize in our limited 3D space.

The data follow y = -5 * x + 0.1 * noise, where x runs from -5 up to (but not including) 5 in steps of 0.1 and the noise is drawn from a standard normal distribution.

import torch

import matplotlib.pyplot as plt

#Data input

x = torch.arange(-5, 5, 0.1).view(-1, 1)

y = -5 * x + 0.1 * torch.randn(x.size())

#Define the model backbone

model = torch.nn.Linear(1, 1) #Model type - Linear

criterion = torch.nn.MSELoss() #Measures the loss - Mean Squared Error between prediction and target

optimizer = torch.optim.SGD(model.parameters(), lr = 0.1) #Stochastic gradient descent - uses the gradients to step toward the minimum
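To make the roles of the loss and the optimizer concrete, here is a minimal sketch that recomputes the mean squared error by hand and repeats one SGD update manually, then checks both against PyTorch. The fixed seed and the names `w0`, `b0`, `manual_loss`, `manual_w` and `manual_b` are assumptions added for illustration; they are not part of the original example.

```python
import torch

torch.manual_seed(0)  # assumption: fixed seed so the sketch is repeatable

# Same data recipe as in the example
x = torch.arange(-5, 5, 0.1).view(-1, 1)
y = -5 * x + 0.1 * torch.randn(x.size())

model = torch.nn.Linear(1, 1)
criterion = torch.nn.MSELoss()
lr = 0.1
optimizer = torch.optim.SGD(model.parameters(), lr=lr)

# Keep a copy of the starting parameters so the update can be redone by hand
w0 = model.weight.detach().clone()
b0 = model.bias.detach().clone()

# MSE by hand: mean of the squared differences between prediction and target
pred = model(x)
loss = criterion(pred, y)
manual_loss = ((pred - y) ** 2).mean()

# One optimizer step, saving the gradients that were used
optimizer.zero_grad()
loss.backward()
grad_w = model.weight.grad.clone()
grad_b = model.bias.grad.clone()
optimizer.step()

# Plain SGD update rule: parameter <- parameter - lr * gradient
manual_w = w0 - lr * grad_w
manual_b = b0 - lr * grad_b

print(torch.allclose(loss, manual_loss))
print(torch.allclose(model.weight.detach(), manual_w))
print(torch.allclose(model.bias.detach(), manual_b))
```

Because all 100 points are passed in at once, each step here is a full-batch gradient descent step, even though the optimizer class is called SGD.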

The function to train the model:

def train_model(n_iter):

    for epoch in range(n_iter):

        y1 = model(x) #Puts x into the model to get the predictions

        loss = criterion(y1, y) #Calculates the loss

        optimizer.zero_grad() #Clears the gradients left over from the previous iteration

        loss.backward() #Computes the gradient of the loss with respect to the weight and bias

        optimizer.step() #Updates the weight and bias using the gradients

train_model(10) # 10 iterations are enough to get the regression
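One way to check that training worked is to look at the learned parameters and the loss history. The sketch below is a self-contained variant of the training loop; `train_and_log` is a hypothetical helper (not from the original code) that returns the loss at each iteration, and the seed is an assumption for repeatability. Since the data were generated with slope -5 and no offset, the weight should end up close to -5 and the bias close to 0.

```python
import torch

torch.manual_seed(0)  # assumption: fixed seed so the sketch is repeatable

x = torch.arange(-5, 5, 0.1).view(-1, 1)
y = -5 * x + 0.1 * torch.randn(x.size())

model = torch.nn.Linear(1, 1)
criterion = torch.nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

def train_and_log(n_iters):
    """Hypothetical variant of train_model that also returns the loss history."""
    losses = []
    for _ in range(n_iters):
        loss = criterion(model(x), y)  # forward pass and loss
        optimizer.zero_grad()          # clear old gradients
        loss.backward()                # gradients w.r.t. weight and bias
        optimizer.step()               # update weight and bias
        losses.append(loss.item())
    return losses

losses = train_and_log(10)
print("first loss:", losses[0], "last loss:", losses[-1])
print("weight:", model.weight.item(), "bias:", model.bias.item())
```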

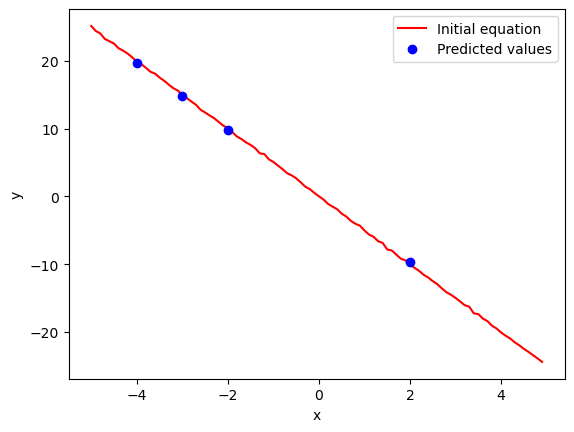

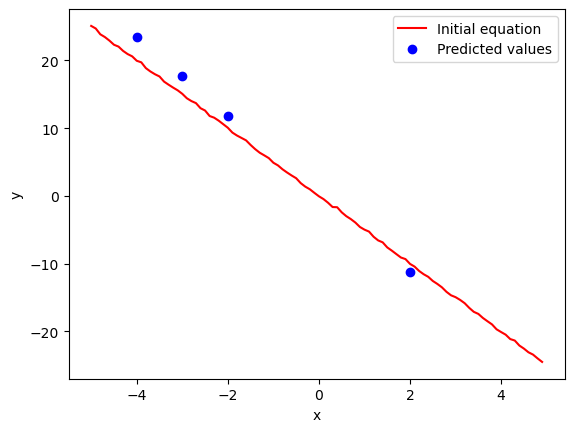

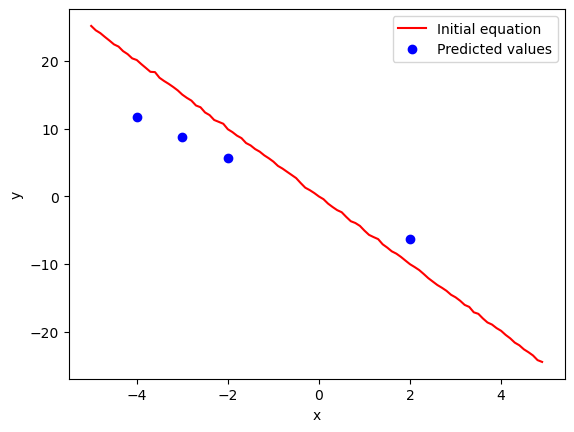

Let’s test the model at x = -4, -3, -2 and 2 and plot the predictions against the data.

#Test the model with four values: -4, -3, -2 and 2

X = torch.tensor([[-4.0], [-3.0], [-2.0], [2.0]])

yhat = model(X) #Predicts y for each value in X

plt.plot(x, y, 'r-')

plt.plot(X, yhat.detach(), 'bo')

plt.show()

This is the plot after 10 iterations:

And after only 5 iterations:

How about two iterations:
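The comparison between 10, 5 and 2 iterations can also be made numerically. The following is a rough sketch, assuming a fresh model with the same seed for each run; `fit` is a hypothetical helper introduced here and is not part of the original code. It reports the learned weight, bias and final loss for each iteration count.

```python
import torch

def fit(n_iters, seed=0):
    """Hypothetical helper: train a fresh linear model for n_iters full-batch steps."""
    torch.manual_seed(seed)  # same data and same initial parameters for every run
    x = torch.arange(-5, 5, 0.1).view(-1, 1)
    y = -5 * x + 0.1 * torch.randn(x.size())
    model = torch.nn.Linear(1, 1)
    criterion = torch.nn.MSELoss()
    optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
    for _ in range(n_iters):
        loss = criterion(model(x), y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
    # Evaluate the loss once more after the last update
    with torch.no_grad():
        final_loss = criterion(model(x), y).item()
    return model.weight.item(), model.bias.item(), final_loss

results = {n: fit(n) for n in (2, 5, 10)}
for n, (w, b, final_loss) in results.items():
    print(f"{n:2d} iterations: weight={w:.3f}, bias={b:.3f}, loss={final_loss:.4f}")
```

With more iterations the weight should move closer to -5 and the loss should shrink, which matches the way the fitted points line up with the data in the plots.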
